IDUN HPC cluster provides access to LLMs ( running locally ).

Do you want web interface to work with LLM modes? Visit https://gpt.ntnu.no and read more here https://i.ntnu.no/wiki/-/wiki/Norsk/GPT+NTNU

Do you need LLM API for research or development, or access to alternative web interfaces? The this page is for you.

How to access

IDUN LLM models are available from NTNU networks or NTNU VPN.

You can use IDUN LLM models via Desktop AI applications like:

- VS Code with extensions (Cline, Roo Code, Continue, Kilo Code, ... )

- ZED

- OpenCode

- Claude Code

- Witsy

- Anythin LLM

- Cherry Studio

Web chat/agent:

- Open WebUI: https://idun-llm.hpc.ntnu.no

- LibreChat: https://ai.hpc.ntnu.no/chat/

Login with your NTNU short username.

iPhone and Android applications like Apollo (tested)

API key:

We create personal API key for each user. Send e-mail to: help@hpc.ntnu.no

See more details and examples in this document.

Rate Limits

We are launching gpt.ntnu.no and it is using the same LLM modes. API users can make LLMs slow.

Please do not run heavy research LLM workloads during working hours. Best time for run big jobs:

- works days between 18:00 – 06:00 (evening, night)

- weekends without time limits

3 LLM models with rate limits now:

- openai/gpt-oss-120b

- moonshotai/Kimi-K2.6

- mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4

Rate limits (defaults):

- TPM: 60000 (tokens per minute)

- RPM: 20 (requests per minute)

Contact us if you need to change your rate limits.

What about sensitive data?

All LLM models on IDUN are running locally and data is not leaving NTNU network.

- API calls go directly into the model, and they are not stored. (vLLM is started with "--no-enable-prefix-caching" and LLM proxy is started with "cache: False" option)

- Use API with desktop AI applications.

- Web interfaces Open WebUI and LibreChat have feature "Temporary Chat". These chats are not saved. "Temporary Chat" sis not enabled by default so users’ questions and answers are stored for user convenience. And user can delete saved conversations manually.

- There is a plan to officially approve for "røde data", but we have not done the formal assessment yet.

Temporary chat toggle is located in the top right corner:

Usage statistics: https://ai.hpc.ntnu.no/stats

LLM models

Updated: 2026-04-30

| Model name | Input format | Created by | Country | License | Parameters | Context Window |

|---|---|---|---|---|---|---|

| (TEST) google/gemma-4-31B-it (FP8 by RedHatAI) | image and text | USA | Apache 2.0 | 31B | 262144 | |

| (TEST) mistralai/Devstral-Small-2-24B-Instruct-2512 | image and text | Mistral AI SAS | France | Apache 2.0 | 24B | 262144 |

| mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4 | image and text | Mistral AI SAS | France | Apache 2.0 | 675B | 294912 |

| moonshotai/Kimi-K2.6 | image and text | Moonshot AI | China | Modified MIT | 1000B | 262144 |

| NorwAI/NorwAI-Magistral-24B-reasoning | text | NorwAI, NTNU | Norway | NorLLM License by NTNU | 24B | 40960 |

| openai/gpt-oss-120b | text | OpenAI | USA | Apache 2.0 | 117B | 131072 |

| Qwen/Qwen3-Embedding-8B | text | Alibaba Cloud | China | Apache 2.0 | 8B | 40960 |

| Qwen/Qwen3.5-122B-A10B-FP8 | image and text | Alibaba Cloud | China | Apache 2.0 | 122B | 262144 |

| (TEST) Qwen/Qwen3.6-27B-FP8 | image and text | Alibaba Cloud | China | Apache 2.0 | 27B | 262144 |

| zai-org/GLM-4.7-FP8 | text | Z.ai | China | MIT | 358B | 202752 |

NOTE: context window = max_input_tokens + max_output_tokens. We configure 66% / 33%. For example for model mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4 with context window 294912 use:

- max_input_tokens: 196608

- max_output_tokens: 98304

NOTE 2: models marked as (TEST) are temporary and available for evaluation.

Click to show "What model developers write about their models"

| Model ID | Comments |

|---|---|

| mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4 | The Mistral Large 3 Instruct model offers the following capabilities: - Vision: Enables the model to analyze images and provide insights based on visual content, in addition to text. - Multilingual: Supports dozens of languages, including English, French, Spanish, German, Italian, Portuguese, Dutch, Chinese, Japanese, Korean, Arabic. - System Prompt: Maintains strong adherence and support for system prompts. - Agentic: Offers best-in-class agentic capabilities with native function calling and JSON outputting. Read about this model: https://huggingface.co/mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4 https://mistral.ai/news/mistral-3 |

| openai/gpt-oss-120b | Highlights: - Configurable reasoning effort: Easily adjust the reasoning effort (low, medium, high) based on your specific use case and latency needs. - Full chain-of-thought: Gain complete access to the model’s reasoning process, facilitating easier debugging and increased trust in outputs. It’s not intended to be shown to end users. - Fine-tunable: Fully customize models to your specific use case through parameter fine-tuning. - Agentic capabilities: Use the models’ native capabilities for function calling, web browsing, Python code execution, and Structured Outputs. Read about this model: https://huggingface.co/openai/gpt-oss-120b https://openai.com/index/introducing-gpt-oss/ |

| zai-org/GLM-4.7-FP8 | GLM-4.7, your new coding partner, is coming with the following features: - Core Coding: multilingual agentic coding and terminal-based tasks. GLM-4.7 also supports thinking before acting, with significant improvements on complex tasks in mainstream agent frameworks such as Claude Code, Kilo Code, Cline, and Roo Code. - Vibe Coding: GLM-4.7 takes a big step forward in improving UI quality. It produces cleaner, more modern webpages and generates better-looking slides with more accurate layout and sizing. - Tool Using: GLM-4.7 achieves significantly improvements in Tool using. - Complex Reasoning: GLM-4.7 delivers a substantial boost in mathematical and reasoning capabilities. Read about this model: https://huggingface.co/zai-org/GLM-4.7-FP8 https://z.ai/blog/glm-4.7 |

| moonshotai/Kimi-K2.6 | Key Features: - Native Multimodality: Pre-trained on vision–language tokens, K2.5 excels in visual knowledge, cross-modal reasoning, and agentic tool use grounded in visual inputs. - Coding with Vision: K2.5 generates code from visual specifications (UI designs, video workflows) and autonomously orchestrates tools for visual data processing. - Agent Swarm: K2.5 transitions from single-agent scaling to a self-directed, coordinated swarm-like execution scheme. It decomposes complex tasks into parallel sub-tasks executed by dynamically instantiated, domain-specific agents. Read about this model: https://huggingface.co/moonshotai/Kimi-K2.6 https://www.kimi.com/blog/kimi-k2-6.html |

| Qwen/Qwen3.5-122B-A10B-FP8 | Qwen3.5 represents a significant leap forward, integrating breakthroughs in multimodal learning, architectural efficiency, reinforcement learning scale, and global accessibility to empower developers and enterprises with unprecedented capability and efficiency. Read about this model: https://huggingface.co/Qwen/Qwen3.5-122B-A10B-FP8 https://qwen.ai/blog?id=qwen3.5 |

| NorwAI/NorwAI-Magistral-24B-reasoning | Reasoning language model, by NowAI research center at Norwegian University of Science and Technology (NTNU) in collaboration with Schibsted, NRK, VG and the National Library of Norway. The model is designed to adapt its reasoning depth dynamically based on the type and complexity of the user’s question: - Completion mode for straightforward answers without reasoning - Short-thinking mode for moderately difficult questions requiring some reasoning - Long-thinking mode for more complex questions requiring deeper reasoning Read about this model: https://huggingface.co/NorwAI/NorwAI-Magistral-24B-reasoning |

| Qwen/Qwen3-Embedding-8B | Highlights - support 100+ Languages, including: Norwegian Bokmål, Norwegian Nynorsk. This includes various programming languages, and provides robust multilingual, cross-lingual, and code retrieval capabilities. - long-text understanding - reasoning skills - The embedding model has achieved state-of-the-art performance across a wide range of downstream application evaluations. Read about this model: https://huggingface.co/Qwen/Qwen3-Embedding-8B https://github.com/QwenLM/Qwen3-Embedding |

Hardware:

Server with 8 x B300 288GB (NVFP4 support):

4 x B300 - moonshotai/Kimi-K2.6

2 x B300 - mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4

2 x B300 - zai-org/GLM-4.7-FP8Server with 8 x H100 80GB

2 x H100 - gpt-oss-120b

2 x H100 - Qwen/Qwen3.5-122B-A10B-FP8

1 x H100 - NorwAI/NorwAI-Magistral-24B-reasoning

1 x H100 - mistralai/Devstral-Small-2-24B-Instruct-2512 (TEST)

1 x H100 - google/gemma-4-31B-it (TEST) model behind is RedHatAI/gemma-4-31B-it-FP8-block

1 x H100 - Qwen/Qwen3.6-27B-FP8 (TEST)Server with 1 x A100 40GB:

1 x A100 - Qwen/Qwen3-Embedding-8B and intfloat/multilingual-e5-large-instructWe use "--kv-cache-dtype fp8" only in Gemma 4 because of the bug and in the Qwen3.6-27B-FP8 because we want to provide full context window on 80GB VRAM GPU.

Gemma 4 has several unresolved issue with vLLM in production:

- Gemma 4 hangs with long context: https://github.com/vllm-project/vllm/issues/40002 # --kv-cache-dtype fp8 solve this issue for now

- Gemma 4 infinite loop answer: https://github.com/vllm-project/vllm/issues/40080 # it happens with original model, but it does not happen with RedHatAI/gemma-4-31B-it-FP8-block

Web interface (Open WebUI)

Open WebUI: https://idun-llm.hpc.ntnu.no

LibreChat: https://ai.hpc.ntnu.no/chat/

API (OpenAI Compatible)

Send email and we'll generate new personal API token for you: help@hpc.ntnu.no

https://llm.hpc.ntnu.no is a LLM gateway to access all LLMs on IDUN HPC cluster. It provides consistent openai compatible API.

You can see all endpoints on this web page https://llm.hpc.ntnu.no/

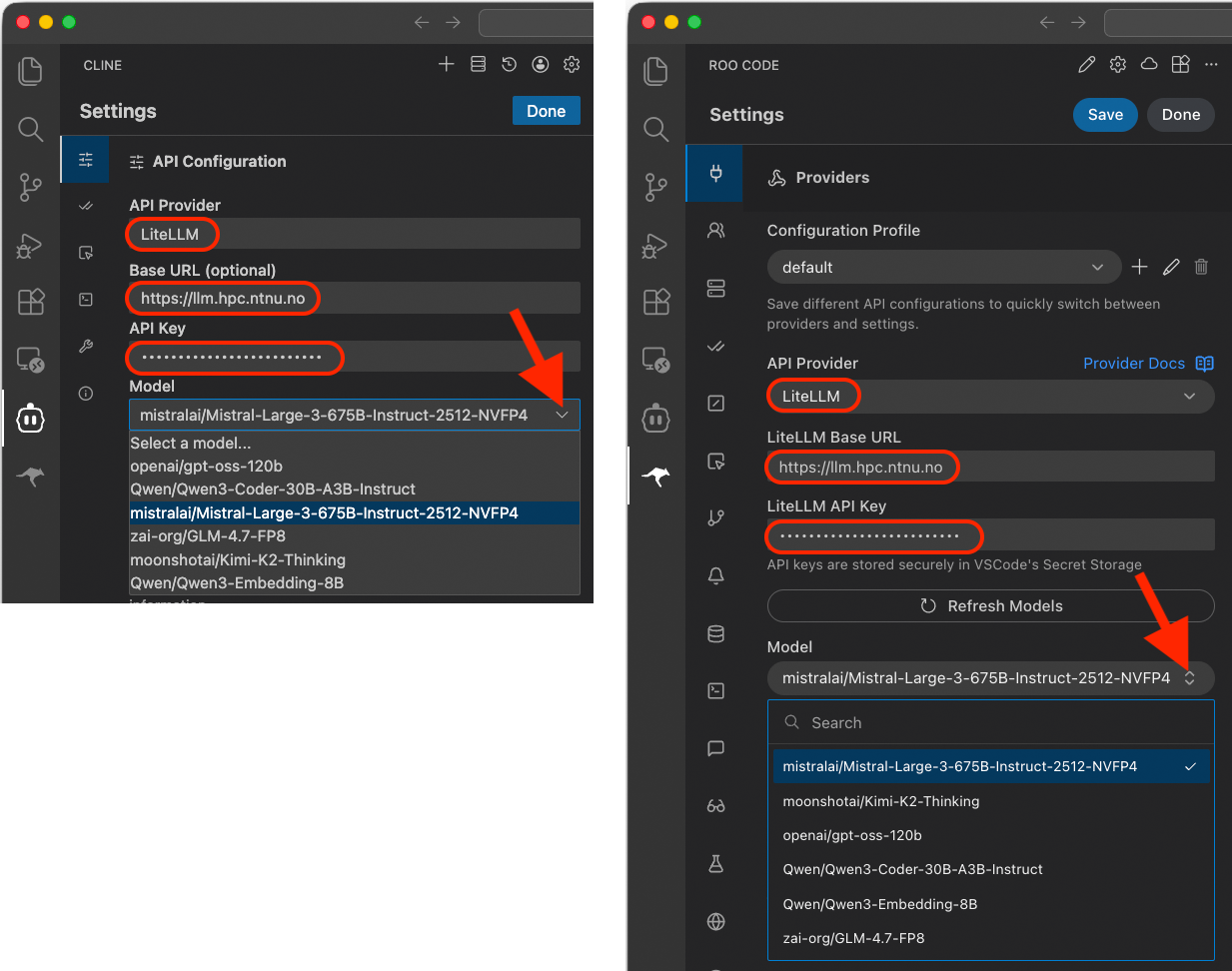

Visual Studio Code - extensions

There are several popular open source VS Code extensions:

- Cline (recommended)

- Roo Code

- Kilo Code

- Continue

NOTE: Current experience with Roo Code. Extension is getting updates every 1-3 days. And that cases somtimes issue. Things that worked yesterday can stop working today. This was my experience with Roo Code and Mistral models. You can downgrade extension and stop auto update.

Base URL: https://llm.hpc.ntnu.no/

API provider: LiteLLM or OpenAI compatible

API Key: send email to help@hpc.ntnu.no to create new.

NOTE 1: Some LLM models works better (no errors) with Cline some are better with Roo Code.

NOTE 2: You can use API Provider "Open API compatible" or "LiteLLM". In most cases they are the same. But for example with Mistral Large 3 in Roo Code "Open API compatible" provider shows sometimes errors. This can be fixed in the next Roo Code update release.

Comparing LLM models undenstanding and coding capabilities. Text prompt (Source https://z.ai/blog/glm-4.7):

Design a richly crafted voxel-art environment featuring an ornate pagoda set within a vibrant garden.

Include diverse vegetation—especially cherry blossom trees—and ensure the composition feels lively, colorful, and visually striking.

Use any voxel or WebGL libraries you prefer, but deliver the entire project as a single, self-contained HTML file that I can paste and open directly in Chrome.

Desktop AI applications

BYOK (Bring Your Own Keys) AI applications. Test several applications. Looking for features:

- Application can connect to LLM models via API on IDUN

- Open Source

- Can work with local documents

- Can use local web search

- Can send images for recognition

These applications was tested:

| Desktop AI application | License | Comment |

|---|---|---|

| Open WebUI | permissive license with branding protection | It can be installed on a local computer. And accessed via web browser http://localhost:8080. Uses embedding model to work with local documents. |

| Witsy | AGPL-3.0 license | Uses embedding model to work with local documents. |

| Cherry Studio | AGPL-3.0 license | Uses embedding model to work with local documents. |

| Anything LLM | MIT License | Uses embedding model to work with local documents. |

| ZED | Open source | Zed is a minimal code editor with AI support out of the box. It is designed to work with code. It is not using embedding model but it understand question and finds answer in local files with LLM model. |

| Visual Studio Code | MIT license | VS Code is a code editor. It can connec to LLM API with extensions like Cline, Roo Code, Kilo Code.... It is not using embedding model but it understand question and finds answer in local files with LLM model. |

Click to show: Configuration example local Open WebUI

Install guide: https://docs.openwebui.com/getting-started/quick-start

Settings location: Click User icon > Admin panel > Settings

Screenshot main interface http://localhost:8080:

Connect to IDUN LLMs:

Configure search engine:

Configure Embedding model for local documents:

Add local documents to a knowledge base:

Add image generation model:

Click to show: Configuration example Witsy

Main interface:

Connect to IDUN LLM

Add embedding model for local documents:

Click to show: Configuration example Cherry Studio

Main interface:

Connect to IDUN LLM

Configure web search engine:

Add directory to knowledge base:

Click to show: Configuration example Anything LLM

Main interface

Connect to IDUN LLM:

Add embedding model:

Configure web search:

Add directory to knowledge base:

Click to show: Configuration example ZED

Main interface:

Connect IDUN LLM:

test...

iPhone (iOS) and Android application

Example configuration application Apollo:

API examples with curl and Python

curl

Test API token - get model list:

curl https://llm.hpc.ntnu.no/v1/models -H "Authorization: Bearer sk-..MY..PESONAL..API..TOKEN.."Test chat response:

curl https://llm.hpc.ntnu.no/v1/chat/completions -H "Authorization: Bearer sk-..MY..PESONAL..API..TOKEN.." -H "Content-Type: application/json" -d '{

"model": "openai/gpt-oss-120b",

"messages": [

{"role": "user", "content": "Who are you?"}

]

}'Example with curl command - embedding:

curl https://llm.hpc.ntnu.no/v1/embeddings -H "Authorization: Bearer sk-..MY..PESONAL..API..TOKEN.." -H "Content-Type: application/json" -d '{

"model": "Qwen/Qwen3-Embedding-8B",

"input": ["hello world", "this is another sentence"]

}'Python - open

This example will use Python module openai. First create Python virtual environment and install openai module:

python3 -m venv venv-openai

source venv-openai/bin/activate

pip install openaiCreate file chat-tools.py with code example with tool calling:

import openai

import json

import datetime

client = openai.OpenAI(

base_url="https://llm.hpc.ntnu.no/v1",

api_key="sk-..MY..PESONAL..API..TOKEN.."

)

def get_current_time():

current_datetime = datetime.datetime.now()

return f"Current Date and Time: {current_datetime}"

tools = [

{

"type": "function",

"function": {

"name": "get_current_time",

"description": "Get current date and time"

},

}

]

response = client.chat.completions.create(

model="openai/gpt-oss-120b",

messages=[{"role": "user", "content": "What's the time right now?"}],

tools=tools

)

# Process the response

response_message = response.choices[0].message

if response_message.tool_calls:

for tool_call in response_message.tool_calls:

function_name = tool_call.function.name

function_args = json.loads(tool_call.function.arguments)

if function_name == "get_current_time":

time_info = get_current_time()

print(f"Tool call executed: {function_name}() -> {time_info}")

else:

print(f"Unknown tool call: {function_name}")

else:

print(f"Model response (no tool call): {response_message.content}")Example output:

$ python3 chat-tools.py

Tool call executed: get_current_time() -> Current Date and Time: 2025-12-29 12:40:34.581986Python - litellm

Example to with Python module litellm. First create Python virtual environment and install litellm module:

python -m venv venv-litellm

source venv-litellm/bin/activate

pip install litellmSet environment variables:

export LITELLM_MODEL=openai/openai/gpt-oss-120b

export LITELLM_API_BASE=https://llm.hpc.ntnu.no/v1

export LITELLM_API_KEY=sk-v...MY...API...KEY...QCreate script file question.py:

import os

import litellm

MODEL = os.getenv("LITELLM_MODEL")

API_BASE = os.getenv("LITELLM_API_BASE")

API_KEY = os.getenv("LITELLM_API_KEY")

response = litellm.completion(

model=MODEL,

messages=[

{"role": "system", "content": "You are a helpful assistant."},

{"role": "user", "content": "Where is located Nidarosdomen?"}

],

api_base=API_BASE,

api_key=API_KEY,

temperature=0,

drop_params=True,

)

print("### RESPONSE ###")

print(response)

print("### CONTENT ###")

print(response.choices[0].message.content)

print("### REASONING ###")

print(response.choices[0].message.reasoning_content)Example output:

$ python question.py

### RESPONSE ###

ModelResponse(id='chatcmpl-b278f109ed072fe8', created=1779180464, model='openai/gpt-oss-120b', object='chat.completion', system_fingerprint=None, choices=[Choices(finish_reason='stop', index=0, message=Message(content='Nidarosdomen, also known as Nidaros Cathedral, is located in the city of **Trondheim** in central **Norway**. It sits on the banks of the River Nidelva, right in the historic center of Trondheim, near the old city gate (Munkholmen) and the Archbishop?s Palace. The cathedral is a major landmark and pilgrimage site, built on the burial place of Saint\u202fOlav, Norway?s patron saint.', role='assistant', tool_calls=None, function_call=None, reasoning_content='The user asks: "Where is located Nidarosdomen?" They likely want location of Nidaros Cathedral (Nidarosdomen). It\'s in Trondheim, Norway. Provide answer.', provider_specific_fields={'refusal': None, 'reasoning': 'The user asks: "Where is located Nidarosdomen?" They likely want location of Nidaros Cathedral (Nidarosdomen). It\'s in Trondheim, Norway. Provide answer.', 'reasoning_content': 'The user asks: "Where is located Nidarosdomen?" They likely want location of Nidaros Cathedral (Nidarosdomen). It\'s in Trondheim, Norway. Provide answer.'}), provider_specific_fields={})], usage=Usage(completion_tokens=143, prompt_tokens=88, total_tokens=231, completion_tokens_details=None, prompt_tokens_details=None), service_tier=None)

### CONTENT ###

Nidarosdomen, also known as Nidaros Cathedral, is located in the city of **Trondheim** in central **Norway**. It sits on the banks of the River Nidelva, right in the historic center of Trondheim, near the old city gate (Munkholmen) and the Archbishop?s Palace. The cathedral is a major landmark and pilgrimage site, built on the burial place of Saint?Olav, Norway?s patron saint.

### REASONING ###

The user asks: "Where is located Nidarosdomen?" They likely want location of Nidaros Cathedral (Nidarosdomen). It's in Trondheim, Norway. Provide answer.OpenCode - configuration example

Instead of hard coding your API key in to the configuration file add your API key into the environment variables with command:

export NTNU_API_KEY=sk-...Personal...API.KEY...Create config file: ~/.config/opencode/config.json

{

"provider": {

"idun-llm": {

"npm": "@ai-sdk/openai-compatible",

"name": "NTNU LLM",

"options": {

"baseURL": "https://llm.hpc.ntnu.no/v1",

"apiKey": "{env:NTNU_API_KEY}"

},

"models": {

"Qwen/Qwen3.5-122B-A10B-FP8": {

"name": "Qwen3.5 122B A10B FP8",

"modalities": {

"input": ["text", "image"],

"output": ["text"]

}

},

"mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4": {

"name": "Mistral Large 3 675B Instruct",

"modalities": {

"input": ["text", "image"],

"output": ["text"]

}

},

"zai-org/GLM-4.7-FP8": {

"name": "GLM 4.7 FP8"

},

"moonshotai/Kimi-K2.6": {

"name": "Kimi K2.6",

"modalities": {

"input": ["text", "image"],

"output": ["text"]

}

}

}

}

}

}Extended example with custom agents:

{

"$schema": "https://opencode.ai/config.json",

"agent": {

"custom_reproducible": {

"mode": "primary",

"temperature": 0,

"top_p": 1.0,

"top_k": 1,

"min_p": 0.0,

"presence_penalty": 0.0,

"repetition_penalty": 1.0,

"seed": 42,

"prompt": "You are an expert software engineer."

},

"custom_qwen35_precise_coding": {

"mode": "primary",

"temperature": 0.6,

"top_p": 0.95,

"top_k": 20,

"min_p": 0.0,

"presence_penalty": 0.0,

"repetition_penalty": 1.0,

"prompt": "You are an expert software engineer."

}

},

"provider": {

"idun-llm": {

"npm": "@ai-sdk/openai-compatible",

"name": "IDUN LLM",

"options": {

"baseURL": "https://llm.hpc.ntnu.no/v1",

"apiKey": "{env:NTNU_API_KEY}",

"timeout": 600000

},

"models": {

"mistralai/Mistral-Large-3-675B-Instruct-2512-NVFP4": {

"name": "Mistral Large 3 675B Instruct",

"temperature": true,

"modalities": {

"input": ["text", "image"],

"output": ["text"]

}

},

"zai-org/GLM-4.7-FP8": {

"name": "GLM 4.7 FP8",

"temperature": true

},

"moonshotai/Kimi-K2.6": {

"name": "Kimi K2.6",

"temperature": true,

"modalities": {

"input": ["text", "image"],

"output": ["text"]

}

},

"Qwen/Qwen3.5-122B-A10B-FP8": {

"name": "Qwen/Qwen3.5-122B-A10B-FP8",

"temperature": true,

"modalities": {

"input": ["text", "image"],

"output": ["text"]

}

}

}

}

}

}Claude Code - configuration example

Install Claude Code. Instruction: https://code.claude.com/docs/en/quickstart.

I used this commands on Mac:

curl -fsSL https://claude.ai/install.sh | bash

echo 'export PATH="$HOME/.local/bin:$PATH"' >> ~/.zshrc && source ~/.zshrcIMPORTANT (to start without subscription): only first time, add this environment variable before starting claude:

export ANTHROPIC_AUTH_TOKEN="ollama"One model configuration

Create config file settings.json in the newly created .claude directory in the home directory .claude/settings.json with these lines:

{

"env": {

"ANTHROPIC_BASE_URL": "https://llm.hpc.ntnu.no",

"ANTHROPIC_AUTH_TOKEN": "sk-...YOUR_API_KEY....",

"API_TIMEOUT_MS": "3000000",

"CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC": 1,

"ANTHROPIC_MODEL": "zai-org/GLM-4.7-FP8",

"ANTHROPIC_SMALL_FAST_MODEL": "zai-org/GLM-4.7-FP8",

"ANTHROPIC_DEFAULT_SONNET_MODEL": "zai-org/GLM-4.7-FP8",

"ANTHROPIC_DEFAULT_OPUS_MODEL": "zai-org/GLM-4.7-FP8",

"ANTHROPIC_DEFAULT_HAIKU_MODEL": "zai-org/GLM-4.7-FP8"

}

}Test. Create directory "garden" and change directory:

mkdir garden

cd gardenStart Claude Code:

claudeMulti-model - configuration:

{

"env": {

"ANTHROPIC_BASE_URL": "https://llm.hpc.ntnu.no/",

"ANTHROPIC_AUTH_TOKEN": "sk-...YOUR_API_KEY....",

"API_TIMEOUT_MS": "3000000",

"CLAUDE_CODE_DISABLE_NONESSENTIAL_TRAFFIC": 1,

"CLAUDE_CODE_DISABLE_EXPERIMENTAL_BETAS": "1",

"ANTHROPIC_MODEL": "zai-org/GLM-4.7-FP8",

"ANTHROPIC_DEFAULT_OPUS_MODEL": "moonshotai/Kimi-K2.6",

"ANTHROPIC_DEFAULT_SONNET_MODEL": "zai-org/GLM-4.7-FP8",

"ANTHROPIC_DEFAULT_HAIKU_MODEL": "mistralai/Devstral-Small-2-24B-Instruct-2512",

"ANTHROPIC_CUSTOM_MODEL_OPTION": "Qwen/Qwen3.5-122B-A10B-FP8"

}

}NOTE: In this example Claude Code can automatically switch between models. For example for /plan mode it will switch to configured OPUS_MODEL.

Mistral Vibe - configuration example

Add you API key to the shell environment befor starting vibe.

export OPENAI_API_KEY="your_api_key_here"Start vibe first time: type random API key, exit. This will create configuration file: ~/.vibe/config.toml. Edit config.toml

# Modify this line to change default model:

active_model = "zai-org/GLM-4.7-FP8"

# Add this lines to add provider and models

[[providers]]

name = "IDUN"

api_base = "https://llm.hpc.ntnu.no/"

api_key_env_var = "OPENAI_API_KEY"

api_style = "openai"

backend = "generic"

reasoning_field_name = "reasoning_content"

project_id = ""

region = ""

[[models]]

name = "zai-org/GLM-4.7-FP8"

provider = "IDUN"

alias = "zai-org/GLM-4.7-FP8"

input_price = 0.4

output_price = 2.0

thinking = "off"

auto_compact_threshold = 200000

[[models]]

name = "Qwen/Qwen3.5-122B-A10B-FP8"

provider = "IDUN"

alias = "Qwen/Qwen3.5-122B-A10B-FP8"

input_price = 0.4

output_price = 2.0

thinking = "off"

auto_compact_threshold = 200000Pi.dev (pi-mono)

Pi is a minimal terminal coding harness. Install Node.js befor installing Pi.

Node.js install: https://nodejs.org/en/download

Install Pi: Follow steps on pi.dev or git:

https://pi.dev

https://github.com/badlogic/pi-mono/

Create configuration file ~/.pi/agent/models.json

Example:

{

"providers": {

"NTNU IDUN HPC": {

"baseUrl": "https://llm.hpc.ntnu.no/",

"api": "openai-completions",

"apiKey": "sk-B....YOUR...API...KEY.....Q",

"models": [

{ "id": "google/gemma-4-31B-it",

"contextWindow": 128000,

"prompt": "You are an expert software engineer and technical architect."

},

{ "id": "mistralai/Devstral-Small-2-24B-Instruct-2512",

"contextWindow": 128000,

"temperature": 0.15,

"prompt": "You are an expert software engineer and technical architect."

},

{ "id": "Qwen/Qwen3.6-27B-FP8",

"contextWindow": 128000,

"temperature": 0.6,

"top_p": 0.95,

"top_k": 20,

"min_p": 0.0,

"presence_penalty": 0.0,

"repetition_penalty": 1.0,

"prompt": "You are an expert software engineer and technical architect."

},

{ "id": "zai-org/GLM-4.7-FP8",

"contextWindow": 128000,

"temperature": 1.0,

"top_p": 0.95,

"prompt": "You are an expert software engineer and technical architect."

}

]

}

}

}Test prompts:

Prompt - 3D lighthouse

Design a richly crafted voxel-art environment featuring a lighthouse on an island. There should be seagulls and boats. Include diverse vegetation on the island - and ensure the composition feels lively, colorful, and visually striking. Use any voxel or WebGL libraries you prefer, but deliver the entire project as a single, self-contained HTML file that I can open directly in web browser.

Prompt - 3D solar system:

Create single HTML file with a 3d rotating solar system to open in web browser. Show planet text information when mouse is ower the planet. It will be possible to zoom and rotate with mouse.

Prompt - 3D racing game:

Design and create a 3D highway racing game. The game must feature 3D graphics in any style you choose. A Start Screen that allows the user to select the car they will use. The user may select from three potential options as follows: A Sports Car, A Sedan, An option of your choosing. Each Car must have realistic limitations on its performance (e.g., top speed, acceleration, handling), which should also be displayed graphically on the car selection screen. Once the car is selected and the game starts, the player's car will begin driving on a busy highway. The player must navigate through dynamic traffic, swerving between lanes to avoid collisions. There MUST be a visible "nitrous oxide" or speed boost effect when used, as well as functional damage implementation for the player's car from collisions. If the player successfully navigates through the traffic for a set distance/time, the level repeats with increased difficulty (e.g., denser traffic, higher speeds, adverse weather). If the player's car sustains critical damage or crashes, the vehicle becomes uncontrollable (e.g., spins out, rolls over), and the screen returns to the home screen following a 2-second black screen. You may use any library for this implementation, but it must be contained within a single script, and be able to be opened and played in the Chrome browser.

Prompt - rotating 3D globe:

Create a single HTML file that sets up a basic Three.js scene with a rotating 3D globe. The globe should have high detail (64 segments), use a placeholder texture for the Earth's surface, and include ambient and directional lighting for realistic shading. Implement smooth rotation animation around the Y-axis, handle window resizing to maintain proper proportions, and use antialiasing for smoother edges. Explanation: Scene Setup: Initializes the scene, camera, and renderer with antialiasing. Sphere Geometry: Creates a high-detail sphere geometry (64 segments). Texture: Loads a placeholder texture using THREE. TextureLoader. Material & Mesh: Applies the texture to the sphere material and creates a mesh for the

globe. Lighting: Adds ambient and directional lights to enhance the scene's realism. Animation: Continuously rotates the globe around its Y-axis. Resize Handling : Adjusts the renderer size and camera aspect ratio when the window is resized.

Raspberry PI - Connect to LLM API from outside campus network.

Alternative to VPN is sshuttle command line tool. It allows to access remote network via SSH protocol.

NOTE 1: sshuttle requires root or sudo access.

NOTE 2: terminal with running sshuttle command should stay opened to keep connecting alive.

Install sshuttle on Raspberry PI:

sudo apt install sshuttleConnect for students:

sudo sshuttle -r USERNAME@login.stud.ntnu.no 129.241.121.16/32Connect for employees:

sudo sshuttle -r USERNAME@login.ansatt.ntnu.no 129.241.121.16/32129.241.121.16 is an IP address for llm.hpc.ntnu.no. So you will be able for reach https://llm.hpc.ntnu.no/… endpoints.